Conceptualizing our potential

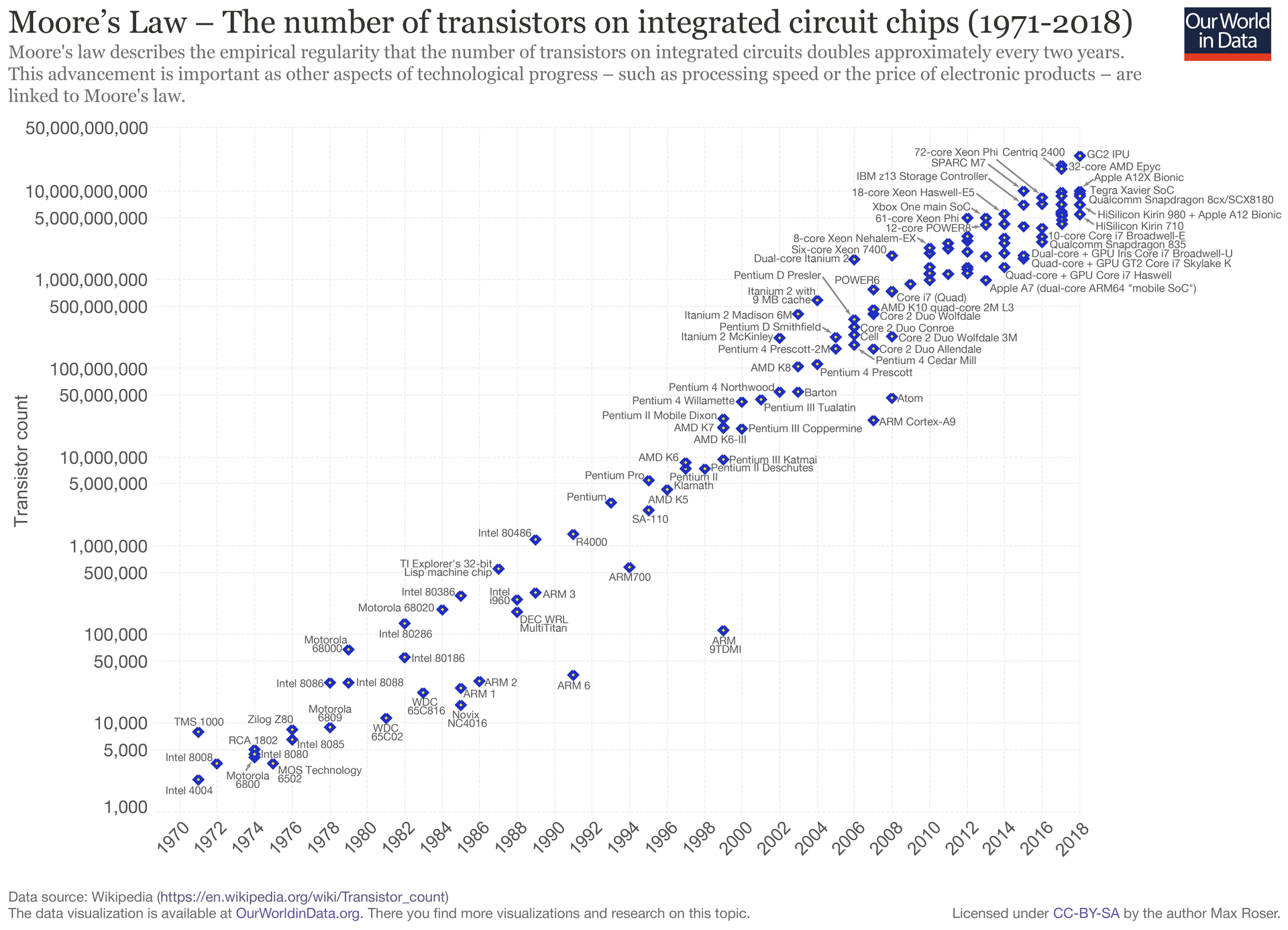

In 1965, Intel co-founder Gordon Moore predicted that the number of components in an integrated circuit would double every year until 1975. The forecast proved remarkably accurate. In 1975, he revised his prediction to a slower rate of one doubling every two years. That revised prediction continued to hold until recently.

This astounding and regular progress has been one of the driving forces of the seemingly inevitable improvements in computer power and affordability that we have experienced in the past few decades. The chart below shows the increasing speed of processors over time on a log scale.

Moore based his forecast on the brief historical trend in the nascent semiconductor industry and his understanding of the manufacturing process. But when he made his prediction, nothing preordained that the "law" would persist.

David Rotman recently recounted:

Moore wrote that "cramming more components onto integrated circuits," the title of his 1965 article, would "lead to such wonders as home computers—or at least terminals connected to a central computer—automatic controls for automobiles, and personal portable communications equipment." In other words, stick to his road map of squeezing ever more transistors onto chips and it would lead you to the promised land. And for the following decades, a booming industry, the government, and armies of academic and industrial researchers poured money and time into upholding Moore's Law, creating a self-fulfilling prophecy that kept progress on track with uncanny accuracy. Though the pace of progress has slipped in recent years, the most advanced chips today have nearly 50 billion transistors.

The prophecy's fulfillment became expected, and that expectation became destiny, in part, "because the semiconductor industry decided it would," Rotman writes. The law gave the industry a clear R&D target and a drumbeat-like cadence. Much as Apple has for years organized all of its iPhone hardware and software development around an annual product release cycle, Moore's law kept continuous pressure on semiconductor manufacturers to advance the state of the art.

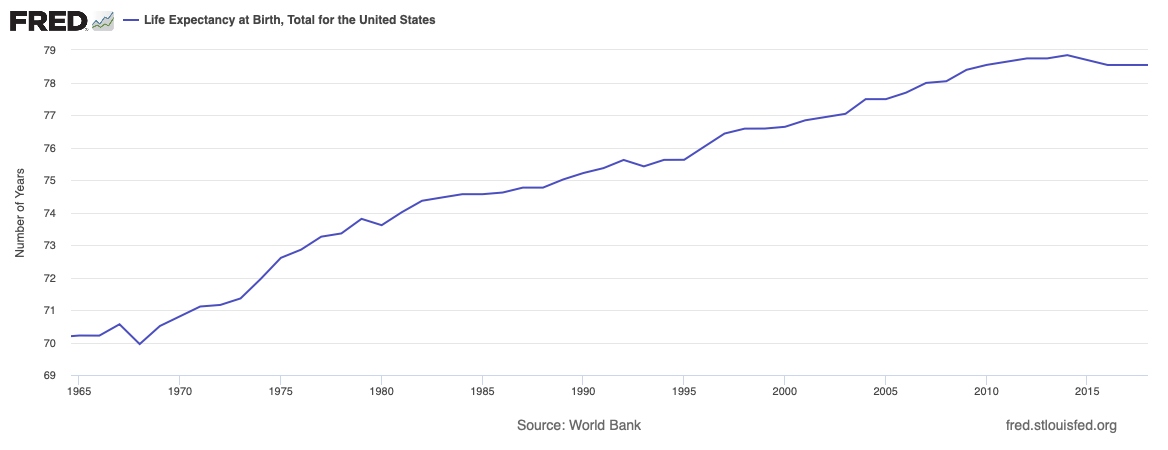

A similarly steady beat has marked progress in extending human life expectancy. Until a slight reversal after 2014, life expectancy in the US had increased by an average of two years with each passing decade. Unlike the exponential growth Moore predicted, this growth is linear, but it has still been steady.

There is no famous "law" that has served as a drumbeat for the pursuit of longevity. Nevertheless, these extra years of expected life are undeniable evidence of our potential to improve ourselves along what many consider a rather important dimension of human performance.

Improving other dimensions of human performance can happen at the level of a single person and can occur much faster. Athletics provides many such examples of transcending what previously seemed to be boundaries of human potential.

In 1954, Roger Bannister became the first person to run a mile in less than four minutes. It was a goal long pursued but long out of reach. Bill Taylor described how substantial the barrier had seemed:

[R]unners had been chasing the goal seriously since at least 1886, and that the challenge involved the most brilliant coaches and gifted athletes in North America, Europe, and Australia. "For years, milers had been striving against the clock, but the elusive four minutes had always beaten them," he notes. "It had become as much a psychological barrier as a physical one. And like an unconquerable mountain, the closer it was approached, the more daunting it seemed."

Because the barrier had stood for decades, many thought Bannister would remain alone in that accomplishment for some time. Instead, he quickly had company. Only 46 days later, John Landy broke the four-minute mark and Bannister's record time. A year later, three runners finished the same race in under four minutes. Over the last 50 years, more than a thousand runners have accomplished a feat that previously may have seemed superhuman (to use the contemporary phrase).

The fastest have gotten faster still. The world record is now held by Hicham El Guerrouj, with a time of 3 minutes and 43.13 seconds.

These athletic feats are a powerful illustration of the potential to improve even our most basic physical capabilities. What if we could similarly enhance our cognitive capabilities?

Almost no one's livelihood depends on how fast they run. But virtually everyone's livelihood depends on how well they think. If we could each double our cognitive capabilities, we would be individually and collectively much better off.

The obvious objection is that this isn't possible. But there is good evidence that we can improve at least some of our cognitive abilities on scales much greater than a mere doubling.

For instance, there is substantial evidence that dramatic improvements in memory are possible for anyone committed to training. Journalist Joshua Foer demonstrated what is possible in his account of his memory training journey in his 2011 book "Moonwalking with Einstein."

Reigning world memory champion Ben Pridmore's ability to memorize "the precise order of 1,528 random digits in an hour" and "any poem handed to him" sparked Foer's curiosity. It burst into flames when he read Pridmore's explanation:

"It's all about technique and understanding how the memory works," he told the reporter. "Anyone could do it, really."

Alongside investigating the people who compete in memory tournaments, Foer decided to compete too. After a year of intense practice, Foer won the US Memory Championship. Along with completing various other feats, Foer memorized every card in a thoroughly shuffled deck faster than all of his competitors.

The techniques Foer learned to memorize random strings of numbers aren't the solution to learning the things that matter to us in school and work. But this narrow ability is still remarkable given that humans can usually remember only "seven [items], plus or minus two." That he and others can remember sequences orders of magnitude longer suggests the correct scale on which to conceptualize our cognitive abilities is much greater than we commonly think.

Consider this in the abstract: If you score 90 on some measure of performance and believe the maximum score possible is 100, then you don't have much room for improvement. Maxing out the scale would make you only about 11% better. If it seemed hard or risky to improve, it probably wouldn't be worth it: you don't have much to gain and have plenty you could lose.

But what if your belief about the maximum score is entirely wrong? What if the maximum is actually 1,000? Then there are tremendous potential gains ahead of you.

How we conceive of our potential has significant effects. If you thought taking care of your health might give you another hundred years of life, wouldn't you act differently than if you thought it might buy you an extra decade at most?

Unfortunately, it is common to think of our cognitive abilities as if the scale only goes to 100. This view is partly related to our education system's performance, which has not generated gains that look like Moore's law or our increasing longevity. The cause could be a lack of well-understood and widely accepted measures of our cognitive abilities. Perhaps thousands of people have learned to think at the equivalent of a four-minute-mile pace, and we simply have no way of seeing it.

But there is no readily available data to support that narrative. Most of the available data related in some way to aggregate cognitive performance is from our education system, where the indicators are quite mixed. I won't draw any conclusions about our education system in this essay—there are both successes and failures that could be analyzed. But, notably, there is no easily accessible story of increasing capability. In inputs and hours spent, yes. But in outputs that matter, it's not so clear. Dysfunctions burden our healthcare system, but healthcare can at least tell a story of progress based on our increasing longevity.

We devote enormous resources to education, and many dedicated people have worked tirelessly to improve our education system. Yet, with perceived results failing to match the perceived effort, many people doubt that future initiatives to improve education will have much impact.

This narrative in which substantial effort translates into minimal results is even more destructive: it shrinks our perceived potential. It suggests the scale really only goes to 100.

But the performance of the memory champions suggests this is misguided. With the right techniques, they can memorize not just a few more cards than the average person but a few more decks of cards. Their performance suggests that substantially more powerful cognitive abilities may be available to all of us.

Unfortunately, stories of great human achievement are often told in a way that inhibits other people rather than expanding their sense of potential. Instead of holding up people with notable accomplishments in a way that says "Look, anyone can walk this path if they want," cultural storytellers often spin a tale of "superhuman" ability based on prodigious "genius" or "talent." Potential progress needs to seem accessible. We only get better by being challenged, but most people will give up if the challenge seems insurmountable.

"Superhuman" sounds impossible. Using it to describe the impressive abilities of people who are all too human is a misuse of the word. More often than not, those abilities are the product of years of deliberate practice, which is accessible to all.

There is much we don't know about how learning and intelligence work and how to optimize them. Yet the existing knowledge is tremendous compared to how much we actually apply. We could all be better learners and more cognitively capable with deliberate practice and different approaches to learning. This will require changes to how we learn at school and work and changes to the institutions in which we learn. These changes are easy to dismiss if we don't appreciate our potential.

The scale doesn't stop at 100. Our potential is greater than we realize.

This post originally appeared at Remixing Work.